Introduction

Overview

Teaching: 0 min

Exercises: 0 minQuestions

What does the acronym “FAIR” stand for, and what does it mean?

How can library services contribute to FAIR research?

Objectives

Articulate the purpose and value of making research FAIR

Understand that library services impact various parts of the research lifecycle

Goals of this lesson:

- To teach FAIRer research data and software management and development practices

- Focus on practical approaches to being FAIRer admitting that there are no “silver bullets”

Library services across the research lifecycle

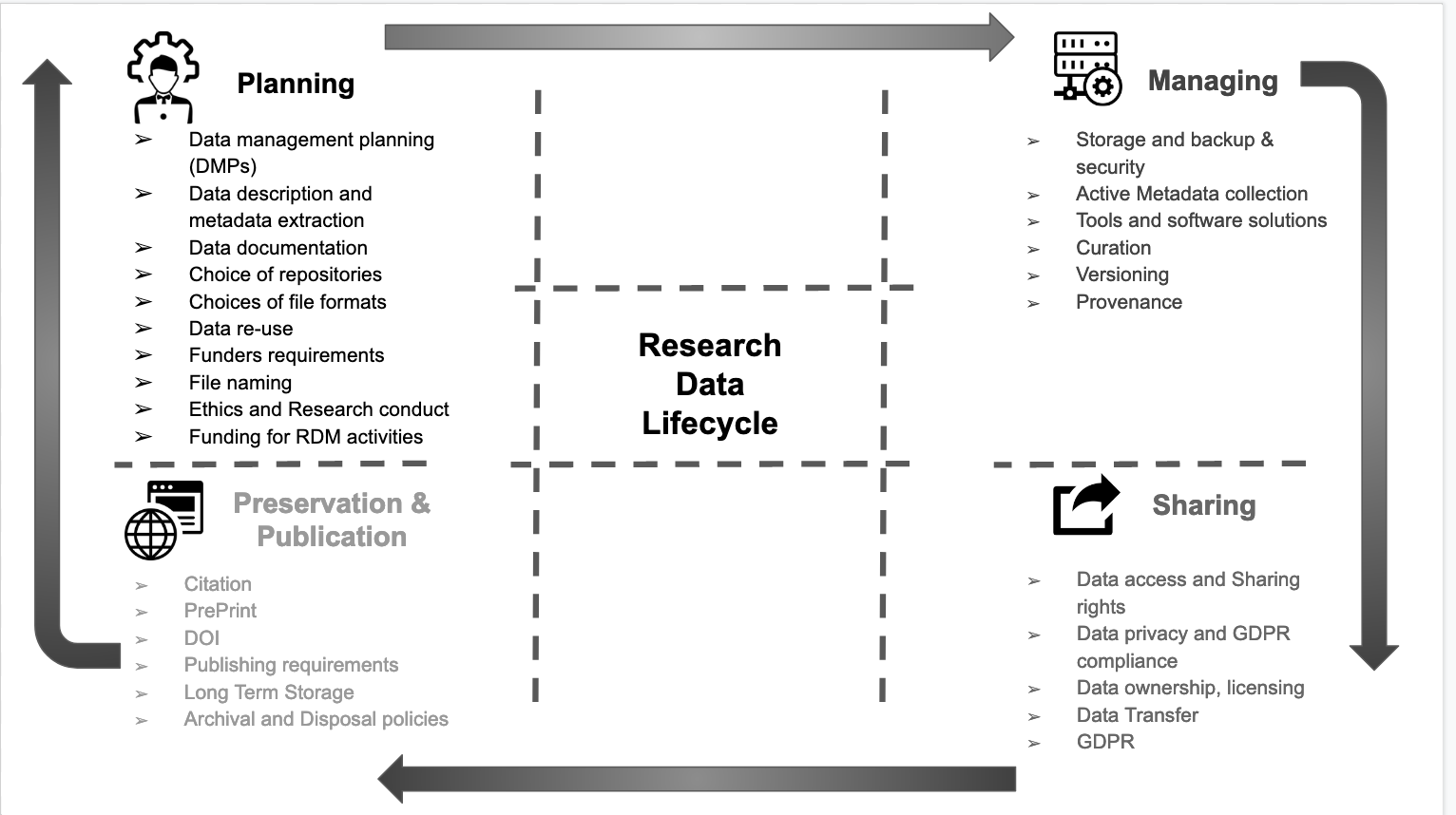

Libraries actively help researchers navigate the requirements, demands, and tools that make up the research data management landscape, particularly when it comes to the organization, preservation, and sharing of research data and software.

They play a vital role in directly supporting the academic enterprise by promoting data sharing and reproducibility. Through their research training and services, particularly for early career researchers and graduate students, libraries are driving a cultural shift towards more effective data and software stewardship.

As a trusted partner, and with an embedded understanding of their communities, libraries foster collaboration and facilitate coordination between community stakeholders and are a critical part of the discussion.

El-Gebali, Sara. (2020, September 29). Research Data Life Cycle. Zenodo. http://doi.org/10.5281/zenodo.4057867

FAIR in one sentence

The FAIR data principles are all about how machines and humans communicate with each other. They are not a standard, but a set of principles for developing robust, extensible infrastructure which facilitates discovery, access and reuse of research data and software.

Where did FAIR come from?

The FAIR data principles emerged from a FORCE11 workshop in 2014. This was formalised in 2016 when these were published in Scientific Data: FAIR Guiding Principles for scientific data management and stewardship. In this article, the authors provide general guidance on machine-actionability and improvements that can be made to streamline the findability, accessibility, interoperatbility, and reuability (FAIR) of digital assets.

“as open as possible, as closed as necessary”

FAIR brings all the stakeholders together

We all win when the outputs of research are properly managed, preserved and reusable. This is applicable from big data of genomic expression all the way through to the ‘small data’ of qualitative research.

Research is increasingly dependent on computational support and yet there are still many bottlenecks in the process. The overall aim of FAIR is to cut down on the inefficient processes in research by taking advantage of linked resources and the exchange of data so that all stakeholders in the research ecosystem, can automate repetitive, boring, error-prone tasks.

Examples of Library Services implementing the FAIR principles

- If your local data repository shares metadata with other aggregators, it’s F for Findable.

- If you advocate for researchers to use ORCIDs and seek DOIs for research data outputs, it’s F for Findable

- If your institution mints DOIs for research datasets… that’s A for Accessible.

- If your institutional data repository enables metadata for harvest by an aggregator, that’s I for Interoperable.

- If you provide advice and consultation services for choosing licences for research data, that’s R for Reusable

Further reading following this lesson

TIB Hannover has provided the following FAIR guide with examples: TIB Hannover FAIR Principles Guide

The European Commission gives tips on implementing FAIR: Six Recommendations for Implementation of FAIR Practice

How does “FAIR” translate to your institution or workplace?

Group exercise Use an etherpad / whiteboard

-

Does your institutional data management policy refer to FAIR principles?

-

If you have a data management planning tool (eg DMP online) go through the mandatory fields and identify where there are FAIR teaching moments.

-

Compile a list of research management tools that your institution provides access to and brainstorm examples where these tools embody the FAIR data principles.

-

Use the FAIR data self assessment tool to help frame your answers.

Key Points

The FAIR principles set out how to make data more usable, by humans and machines, by making it Findable, Accessible, Interoperable and Reusable.

Librarians have key expertise in information management that can help researchers navigate the process of making their research more FAIR

Findable

Overview

Teaching: 0 min

Exercises: 0 minQuestions

What is a persistent identifier or PID?

What types of PIDs are there?

Objectives

Explain what globally unique, persistent, resolvable identifiers are and how they make data and metadata findable

Articulate what metadata is and how metadata makes data findable

Articulate how metadata can be explicitly linked to data and vice versa

Understand how and where to find data discovery platforms

Articulate the role of data repositories in enabling findable data

For data & software to be findable:

F1. (meta)data are assigned a globally unique and eternally persistent identifier or PID

F2. data are described with rich metadata

F3. (meta)data are registered or indexed in a searchable resource

F4. metadata specify the data identifier

Persistent identifiers (PIDs) 101

A persistent identifier (PID) is a long-lasting reference to a (digital or physical) resource:

- Designed to provide access to information about a resource even if the resource it describes has moved location on the web

- Requires technical, governance and community to provide the persistence

- There are many different PIDs available for many different types of scholarly resources e.g. articles, data, samples, authors, grants, projects, conference papers and so much more

Different types of PIDs

PIDs have community support, organizational commitment and technical infrastructure to ensure persistence of identifiers. They often are created to respond to a community need. For instance, the International Standard Book Number or ISBN was created to assign unique numbers to books, is used by book publishers, and is managed by the International ISBN Agency. Another type of PID, the Open Researcher and Contributor ID or ORCID (iD) was created to help with author disambiguation by providing unique identifiers for authors. The ODIN Project identifies additional PIDs along with Wikipedia’s page on PIDs.

Digital Object Identifiers (DOIs)

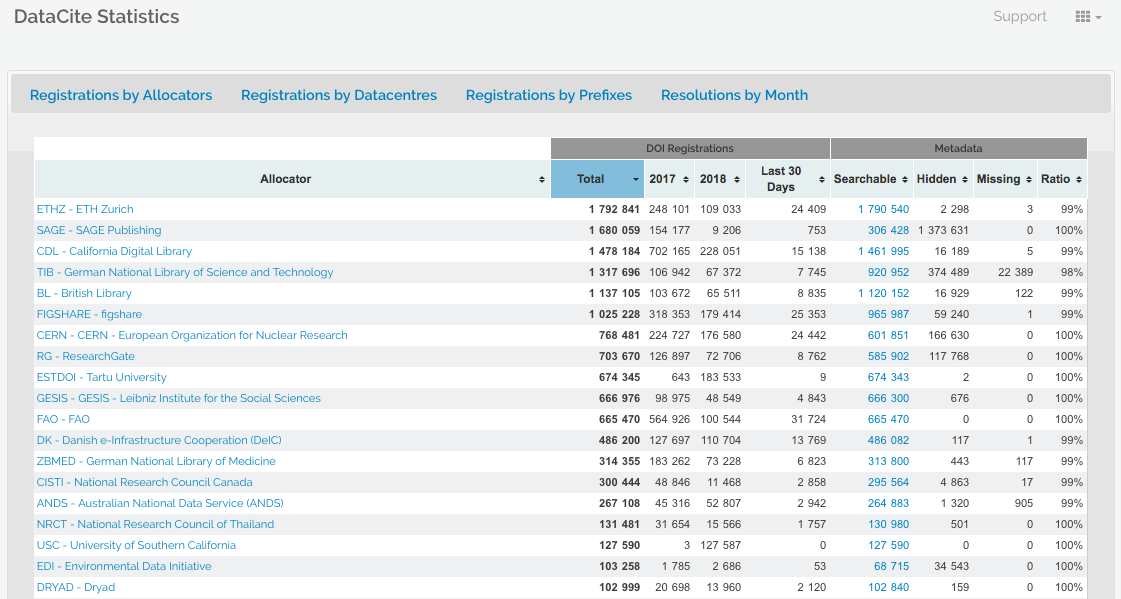

The DOI is a common identifier used for academic, professional, and governmental information such as articles, datasets, reports, and other supplemental information. The International DOI Foundation (IDF) is the agency that oversees DOIs. CrossRef and Datacite are two prominent not-for-profit registries that provide services to create or mint DOIs. Both have membership models where their clients are able to mint DOIs distinguished by their prefix. For example, DataCite features a statistics page where you can see registrations by members.

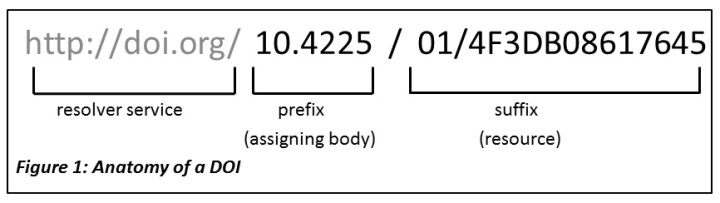

Anatomy of a DOI

A DOI has three main parts:

- Proxy or DOI resolver service

- Prefix which is unique to the registrant or member

- Suffix, a unique identifier assigned locally by the registrant to an object

In the example above, the prefix is used by the Australian National Data Service (ANDS) now called the Australia Research Data Commons (ARDC) and the suffix is a unique identifier for an object at Griffith University. DataCite provides DOI display guidance so that they are easy to recognize and use, for both humans and machines.

Challenge

arXiv is a preprint repository for physics, math, computer science and related disciplines. It allows researchers to share and access their work before it is formally published. Visit the arXiv new papers page for Machine Learning. Choose any paper by clicking on the ‘pdf’ link next to it. Now use control + F or command + F and search for ‘http’. Did the author use DOIs for their data and software?

Solution

Authors will often link to platforms such as GitHub where they have shared their software and/or they will link to their website where they are hosting the data used in the paper. The danger here is that platforms like GitHub and personal websites are not permanent. Instead, authors can use repositories to deposit and preserve their data and software while minting a DOI. Links to software sharing platforms or personal websites might move but DOIs will always resolve to information about the software and/or data. See DataCite’s Best Practices for a Tombstone Page.

Rich Metadata

More and more services are using common schemas such as DataCite’s Metadata Schema or Dublin Core to foster greater use and discovery. A schema provides an overall structure for the metadata and describes core metadata properties. While DataCite’s Metadata Schema is more general, there are discipline specific schemas such as Data Documentation Initiative (DDI) and Darwin Core.

Thanks to schemas, the process of adding metadata has been standardised to some extent but there is still room for error. For instance, DataCite reports that links between papers and data are still very low. Publishers and authors are missing this opportunity.

Challenges: Automatic ORCID profile update when DOI is minted RelatedIdentifiers linking papers, data, software in Zenodo

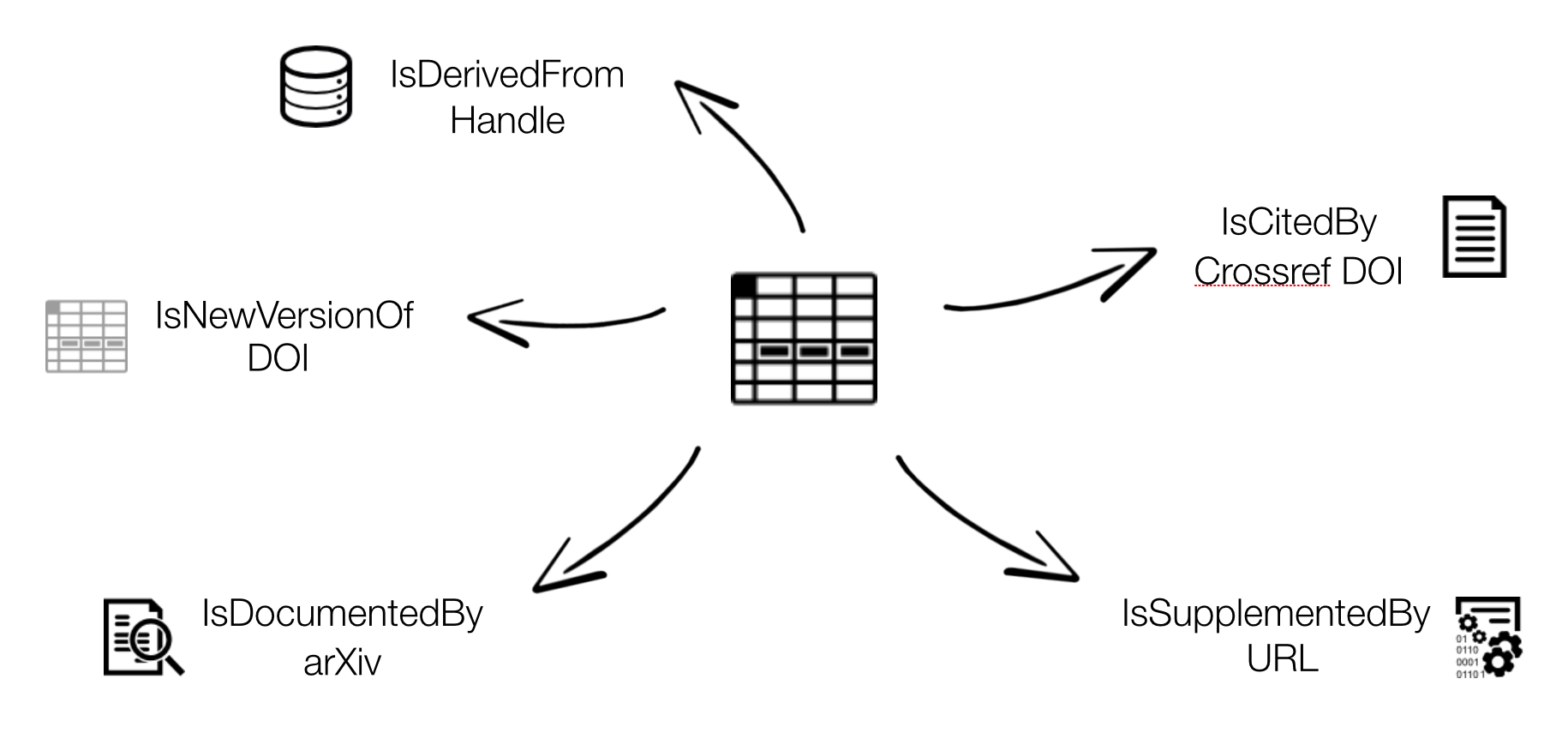

Connecting research outputs

DOIs are everywhere. Examples.

Resource IDs (articles, data, software, …) Researcher IDs Organisation IDs, Funder IDs Projects IDs Instrument IDs Ship cruises IDs Physical sample IDs, DMP IDs… videos images 3D models grey literature

https://support.datacite.org/docs/connecting-research-outputs

Bullet points about the current state of linking… https://blog.datacite.org/citation-analysis-scholix-rda/

Provenance?

Provenance means validation & credibility – a researcher should comply to good scientific practices and be sure about what should get a PID (and what not). Metadata is central to visibility and citability – metadata behind a PID should be provided with consideration. Policies behind a PID system ensure persistence in the WWW - point. At least metadata will be available for a long time. Machine readability will be an essential part of future discoverability – resources should be checked and formats should be adjusted (as far possible). Metrics (e.g. altmetrics) are supported by PID systems.

Publishing behaviour of researchers

According to:

Technische Informationsbibliothek (TIB) (conducted by engage AG) (2017): Questionnaire and Dataset of the TIB Survey 2017 on information procurment and pubishing behaviour of researchers in the natural sciences and engineering. Technische Informationsbibliothek (TIB). DOI: https://doi.org/10.22000/54

- responses from 1400 scientists in the natural sciences & engineering (across Germany)

- 70% of the researchers are using DOIs for journal publications

- less than 10% use DOIs for research data – 56% answered that they don’t know about the option to use DOIs for other publications (datasets, conference papers etc.) – 57% stated no need for DOI counselling services – 40% of the questioned researchers need more information – 30% cannot see a benefit from a DOI

Choosing the right repository

Ask your colleagues & collaborators Look for institutional repository at your own institution

determining the right repo for your reseearch data are kept safe in a secure environment data are regularly backed up and preserved (long-term) for future use data can be easily discovered by search engines and included in online catalogues intellectual property rights and licencing of data are managed access to data can be administered and usage monitored the visibility of data can be enhanced enables more use and citation citation of data increases researchers scientific reputation Decision for or against a specific repository depends on various criteria, e.g. Data quality Discipline Institutional requirements Reputation (researcher and/or repository) Visibility of research Legal terms and conditions Data value (FAIR Principles) Exit strategy (tested?) Certificate (based only on documents?)

Some recommendations: → look for the usage of PIDs → look for the usage of standards (DataCite, Dublin Core, discipline-specific metadata → look for licences offered → look for certifications (DSA / Core Trust Seal, DINI/nestor, WDS, …)

Searching re3data w/ exercise https://www.re3data.org/ Out of more than 2115 repository systems listed in re3data.org in July 2018, only 809 (less than 39 %!) state to provide a PID service, with 524 of them using the DOI system

Search open access repos http://v2.sherpa.ac.uk/opendoar/

FAIRSharing https://fairsharing.org/databases/

Data Journals

Another method available to researchers to cite and give credit to research data is to author works in data journals or supplemental approaches used by publishers, societies, disciplines, and/or journals.

Articles in data journals allow authors to:

- Describe their research data (including information about process, qualities, etc)

- Explain how the data can be reused

- Improve discoverability (through citation/linking mechanisms and indexing)

- Provide information on data deposit

- Allow for further (peer) review and quality assurance

- Offer the opportunity for further recognition and awards

Examples:

- Nature Scientific data - published by Nature and established in 2013

- Geoscience Data Journal - published by Wiley and established in 2012

- Journal of Open Archaeology Data - published by Ubiquity and established in 2011

- Biodiversity Data Journal - published by Pensoft and established in 2013.

- Earth System Science Data - published by Copernicus Publications and established in 2009

Also, the following study discusses data journals in depth and reviews over 100 data journals: Candela, L. , Castelli, D. , Manghi, P. and Tani, A. (2015), Data Journals: A Survey. J Assn Inf Sci Tec, 66: 1747-1762. doi:10.1002/asi.23358

How does your discipline share data

Does your discipline have a data journal? Or some other mechanism to share data? For example, the American Astronomical Society (AAS) via the publisher IOP Physics offers a supplment series as a way for astronomers to publish data.

List recent publications re: benefits of data sharing / software sharing

Questions: Is FAIRSharing vs re3data comparison slide from TIB findability slides needed here? Should we include recent thread about handle system vs DOIs in IRs (costs) Zenodo-GitHub linking is listed in another episode, right? Include guidance for Google schema indexing…

Notes:

Note about authors being proactive and working with the journals/societies to improve papers referencing data, software…

Tombstone

Key Points

First key point.

Accessible

Overview

Teaching: 0 min

Exercises: 0 minQuestions

Key question

Objectives

Understand what a protocol is

Understand authentication protocols and their role in FAIR

Articulate the value of landing pages

Explain closed, open and mediated access to data

For data & software to be accessible:

A1. (meta)data are retrievable by their identifier using a standardized communications protocol

A1.1 the protocol is open, free, and universally implementable

A1.2 the protocol allows for an authentication and authorization procedure, where necessary

A2. metadata remain accessible, even when the data are no longer available

What is a protocol?

Simply put, it’s an access method of exchanging data over a computer network. Each protocol has its rules for how data is formatted, compressed, checked for errors. Research repositories often use the OAI-PMH or REST API protocols to interface with data in the repository. The following image from TutorialEdge.net: What is a RESTful API by Elliot Forbes provides a useful overview of how RESTful interfaces work:

Zenodo offers a visual interface for seeing how formats such as DataCite XML will look like when requested for records such as the following record from the Biodiversity Literature Repository:

Formiche di Madagascar raccolte dal Sig. A. Mocquerys nei pressi della Baia di Antongil (1897-1898).

Wikipedia has a list of commonly used network protocols but check the service you are using for documentation on the protocols it uses and whether it corresponds with the FAIR Principles. For instance, see Zenodo’s Principles page.

Contributor information

Alternatively, for sensitive/protected data, if the protocol cannot guarantee secure access, an e-mail or other contact information of a person/data manager should be provided, via the metadata, with whom access to the data can be discussed. The DataCite metadata schema includes contributor type and name as fields where contact information is included. Collaborative projects such as THOR, FREYA, and ODIN are working towards improving the interoperability and exchange of metadata such as contributor information.

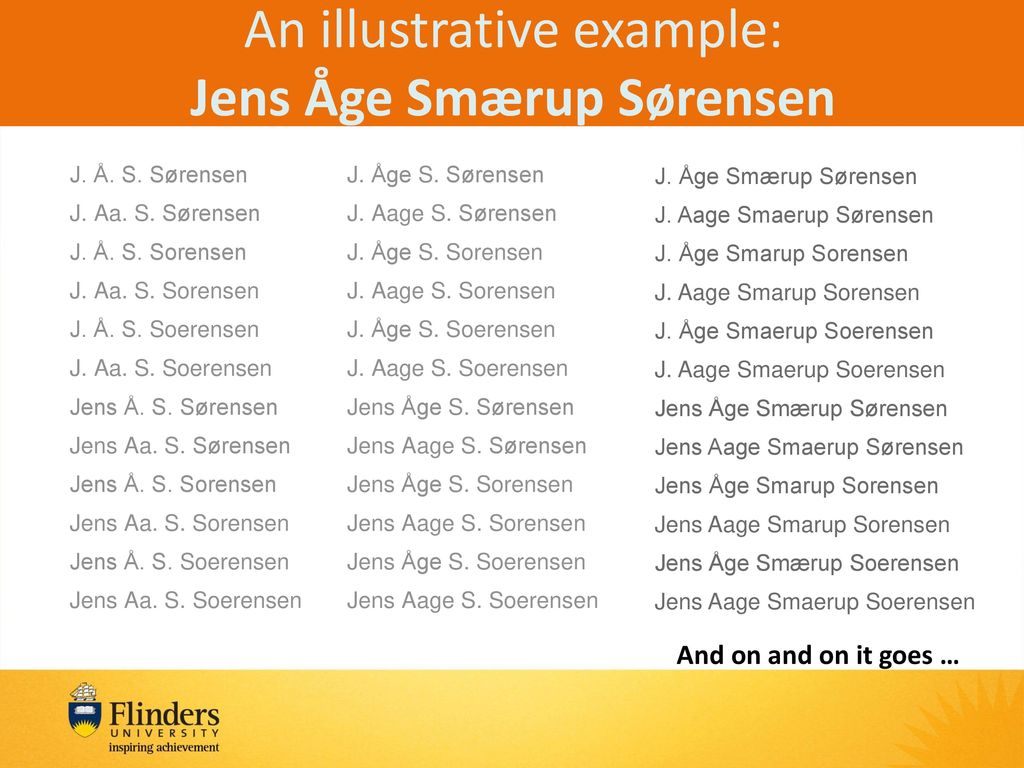

Author disambiguation and authentication

Across the research ecosystem, publishers, repositories, funders, research information systems, have recognized the need to address the problem of author disambiguation. The illustrative example below of the many variations of the name Jens Åge Smærup Sørensen demonstrations the challenge of wrangling the correct name for each individual author or contributor:

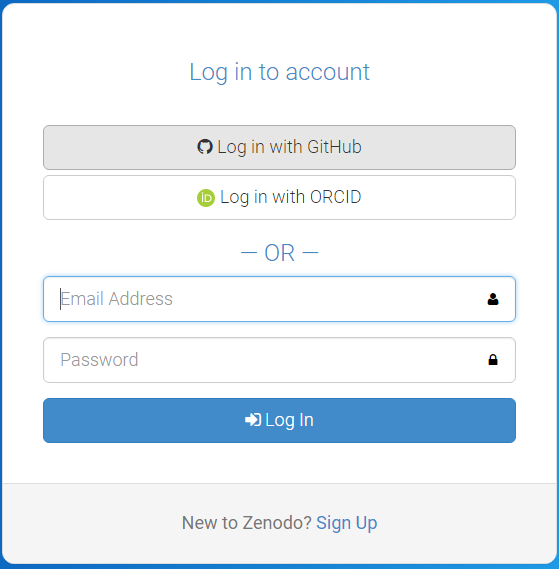

Thankfully, a number of research systems are now integrating ORCID into their authentication systems. Zenodo provides the login ORCID authentication option. Once logged in, your ORCID will be assigned to your authored and deposited works.

Exercise to create ORCID account and authenticate via Zenodo

- Register for an ORCID.

- You will receive a confirmation email. Click the link in the email to establish your unique 16-digit ORCID.

- Go to Zenodo and select Log in (if you are new to Zenodo select Sign up).

- Go to linked accounts and click the Connect button next to ORCID.

Next time you log into Zenodo you will be able to ‘Log in with ORCID’:

Understanding whether something is open, free, and universally implementable

ORCID features a principles page where we can assess where it lies on the spectrum of these criteria. Can you identify statements that speak to these conditions: open, free, and universally implemetable?

Answers:

- ORCID is a non-profit that collects fees from its members to sustain its operations

- Creative Commons CC0 1.0 Universal (CC0) license releases data into the public domain, or otherwise grants permission to use it for any purpose

- It is open to any organization and transcends borders

Challenge Questions:

- Where can you download the freely available data?

- How does ORCID solicit community input outside of its governance?

- Are the tools used to create, read, update, delete ORCID data open?

Tombstones, a very grave subject

There are a variety of reasons why a placeholder with metadata or tombstone of the removed research object exists including but not limited to staff removal, spam, request from owner, data center does not exist is still, etc. A tombstone page is needed when data and software is no longer accessible. A tombstone page communicates that the record is gone, why it is gone, and in case you really must know, there is a copy of the metadata for the record. A tombstone page should include: DOI, date of deaccession, reason for deaccession, message explaining the data center’s policies, and a message that a copy of the metadata is kept for record keeping purposes as well as checksums of the files. Zenodo offers us further explanation of the reasoning behind tombstone pages.

DataCite offers statistics where the failure to resolve DOIs after a certain number of attempts is reported (see DataCite statistics support pagefor more information). In the case of Zenodo and the GitHub issue above, the hidden field reveals thousands of records that are a result of spam.

If a DOI is no longer available and the data center does not have the resources to create a tombstone page, DataCite provides a generic tombstone page.

See the following tombstone examples:

- Zenodo tombstone: https://zenodo.org/record/1098445

- Figshare tombstone: https://figshare.com/articles/Climate_Change/1381402

Discussion of tombstones

Key Points

First key point.

Interoperable

Overview

Teaching: 0 min

Exercises: 0 minQuestions

What does interoperability mean?

What is a controlled vocabulary, a metadata schema and linked data?

How do I describe data so that humans and computers can understand?

Objectives

Explain what makes data and software (more) interoperable for machines

Identify widely used metadata standards for research, including generic and discipline-focussed examples

Explain the role of controlled vocabularies for encoding data and for annotating metadata in enabling interoperability

Understand how linked data standards and conventions for metadata schema documentation relate to interoperability

For data & software to be interoperable:

I1. (meta)data use a formal, accessible, shared, and broadly applicable language for knowledge representation

I2. (meta)data use vocabularies that follow FAIR principles

I3. (meta)data include qualified references to other (meta)data

What is interoperability for data and software?

Shared understanding of concepts, for humans as well as machines.

What does it mean to be machine readable vs human readable?

According to the Open Data Handbook:

Human Readable

“Data in a format that can be conveniently read by a human. Some human-readable formats, such as PDF, are not machine-readable as they are not structured data, i.e. the representation of the data on disk does not represent the actual relationships present in the data.”

Machine Readable

“Data in a data format that can be automatically read and processed by a computer, such as CSV, JSON, XML, etc. Machine-readable data must be structured data. Compare human-readable. Non-digital material (for example printed or hand-written documents) is by its non-digital nature not machine-readable. But even digital material need not be machine-readable. For example, consider a PDF document containing tables of data. These are definitely digital but are not machine-readable because a computer would struggle to access the tabular information - even though they are very human readable. The equivalent tables in a format such as a spreadsheet would be machine readable. As another example scans (photographs) of text are not machine-readable (but are human readable!) but the equivalent text in a format such as a simple ASCII text file can machine readable and processable.”

Software uses community accepted standards and platforms, making it possible for users to run the software. Top 10 FAIR things for research software

Describing data and software with shared, controlled vocabularies

See

- https://librarycarpentry.org/Top-10-FAIR//2018/12/01/research-data-management/#thing-8-controlled-vocabulary

- https://librarycarpentry.org/Top-10-FAIR//2019/09/06/astronomy/#thing-6-terminology

- https://librarycarpentry.org/Top-10-FAIR//2018/12/01/historical-research/#thing-6-controlled-vocabularies-and-ontologies

Representing knowledge in data and software

Beyond the PDF

Publishers, librarians, researchers, developers, funders, they have all been working towards a future where we can move beyond the PDF, from ‘static and disparate data and knowledge representations to richly integrated content which grows and changes the more we learn.” Research objects of the future will capture all aspects of scholarship: hypotheses, data, methods, results, presentations etc.) that are semantically enriched, interoperable and easily transmitted and comprehended. Attribution, Evaluation, Archiving, Impact https://sites.google.com/site/beyondthepdf/

Beyond the PDF has now grown into FORCE… Towards a vision where research will move from document- to knowledge-based information flows semantic descriptions of research data & their structures aggregation, development & teaching of subject-specific vocabularies, ontologies & knowledge graphs Paper of the Future https://www.authorea.com/users/23/articles/8762-the-paper-of-the-future to Jupyter Notebooks/Stencilia https://stenci.la/

Knowledge representation languages

provide machine-readable (meta)data with a well-established formalism structured, using discipline-established vocabularies / ontologies / thesauri (RDF extensible knowledge representation model, OWL, JSON LD, schema.org) offer (meta)data ingest from relevant sources (Document Information Dictionary or Extensible Metadata Platform from PDF) provide as precise & complete metadata as possible look for metrics to evaluate the FAIRness of a controlled vocabulary / ontology / thesaurus often do not (yet) exist assist in their development clearly identify relationships between datasets in the metadata (e.g. “is new version of”, “is supplement to”, “relates to”, etc.) request support regarding these tasks from the repositories in your field of study for software: follow established code style guides (thanks to @npch!)

Adding qualified references among data and software

support referencing metadata fields between datasets via a schema (relatedIdentifer, relationType)

Data Science and Digital Libraries => (research) knowledge graph(s) Scientific Data Management Visual Analytics to expose information within videos as keywords => av.tib.eu Scientific Knowledge Engineering => ontologies

Example: → Automatic ORCID profile update when DOI is minted DataCite – CrossRef – ORCID collaboration → PID of choice for RDM: Here: The Digital Object Identifier (DOI)

Detour: Replication / Reproducibility Crisis doi.org/10.1073/pnas.1708272114 doi.org/10.1371/journal.pbio.1002165 doi.org/10.12688/f1000research.11334.1 Examples of science failing due to software errors/bugs: figshare.com/authors/Neil_Chue_Hong/96503

“[…] around 70% of research relies on software […] if almost a half of that software is untested, this is a huge risk to the reliability of research results.” Results from a US survey about Research Software Engineers URSSI.us/blog/2018/06/21/results-from-a-us-survey-about-research-software-engineers (Daniel S. Katz, Sandra Gesing, Olivier Philippe, and Simon Hettrick) Olivier Philippe, Martin Hammitzsch, Stephan Janosch, Anelda van der Walt, Ben van Werkhoven, Simon Hettrick, Daniel S. Katz, Katrin Leinweber, Sandra Gesing, Stephan Druskat. 2018. doi.org/10.5281/zenodo.1194669

Code style guides & formatters (thanks to Neil Chu Hong) faster than manual/menial formatting code looks the same, regardless of author can be automated enforced to keep diffs focussed PyPI.org/project/pycodestyle, /black, etc. ROpenSci packaging guide style.tidyverse.org Google.GitHub.io/styleguide

If others can use your code, convey the meaning of updates with SemVer.org (CC BY 3.0) “version number[ changes] convey meaning about the underlying code” (Tom Preston-Werner)

Exercise Python & R Carpentries lessons

Linked Data

Top 10 FAIR things: Linked Open Data

Linked data example Triples - RDF - SPARQL Wikidata exercise

Standards: https://fairsharing.org/standards/ schema.org: http://schema.org/

ISA framework: ‘Investigation’ (the project context), ‘Study’ (a unit of research) and ‘Assay’ (analytical measurement) - https://isa-tools.github.io/

Example of schema.org: rOpenSci/codemetar

Modularity http://bioschemas.org

codemeta croswalks to other standards https://codemeta.github.io/crosswalk/

DCAT https://www.w3.org/TR/vocab-dcat/

Using community accepted code style guidelines such as PEP 8 for Python (PEP 8 itself is FAIR)

Scholix - related indentifiers - Zenodo example linking data/software to papers https://dliservice.research-infrastructures.eu/#/ https://authorcarpentry.github.io/dois-citation-data/01-register-doi.html

Should vocabularies from reusable episode be moved here?

Key Points

Understand that FAIR is about both humans and machines understanding data.

Interoperability means choosing a data format or knowledge representation language that helps machines to understand the data.

Reusable

Overview

Teaching: 0 min

Exercises: 0 minQuestions

Key question

Objectives

Explain machine readability in terms of file naming conventions and providing provenance metadata

Explain how data citation works in practice

Understand key components of a data citation

Explore domain-relevant community standards including metadata standards

Understand how proper licensing is essential for reusability

Know about some of the licenses commonly used for data and software

For data & software to be reusable:

R1. (meta)data have a plurality of accurate and relevant attributes

R1.1 (meta)data are released with a clear and accessible data usage licence

R1.2 (meta)data are associated with their provenance

R1.3 (meta)data meet domain-relevant community standards

What does it mean to be machine readable vs human readable?

According to the Open Data Handbook:

Human Readable “Data in a format that can be conveniently read by a human. Some human-readable formats, such as PDF, are not machine-readable as they are not structured data, i.e. the representation of the data on disk does not represent the actual relationships present in the data.”

Machine Readable “Data in a data format that can be automatically read and processed by a computer, such as CSV, JSON, XML, etc. Machine-readable data must be structured data. Compare human-readable.

Non-digital material (for example printed or hand-written documents) is by its non-digital nature not machine-readable. But even digital material need not be machine-readable. For example, consider a PDF document containing tables of data. These are definitely digital but are not machine-readable because a computer would struggle to access the tabular information - even though they are very human readable. The equivalent tables in a format such as a spreadsheet would be machine readable.

As another example scans (photographs) of text are not machine-readable (but are human readable!) but the equivalent text in a format such as a simple ASCII text file can machine readable and processable.”

File naming best practices

A file name should be unique, consistent and descriptive. This allows for increased visibility and discoverability and can be used to easily classify and sort files. Remember, a file name is the primary identifier to the file and its contents.

Do’s and Don’ts of file naming:

Do’s:

- Make use of file naming tools for bulk naming such as Ant Renamer, RenameIT or Rename4Mac.

- Create descriptive, meaningful, easily understood names no less than 12-14 characters.

- Use identifiers to make it easier to classify types of files i.e. Int1 (interview 1)

- Make sure the 3-letter file format extension is present at the end of the name (e.g. .doc, .xls, .mov, .tif)

- If applicable, include versioning within file names

- For dates use the ISO 8601 standard: YYYY-MM-DD and place at the end of the file number UNLESS you need to organise your files chronologically.

- For experimental data files, consider using the project/experiment name and conditions in abbreviations

- Add a README file in your top directory which details your naming convention, directory structure and abbreviations

-

- When combining elements in file name, use common special letter case patterns such as Kebab-case, CamelCase, or Snake_case, preferably use hyphens (-) or underscores (_)

Don’ts:

- When combining elements in file name, use common special letter case patterns such as Kebab-case, CamelCase, or Snake_case, preferably use hyphens (-) or underscores (_)

- Avoid naming files/folders with individual persons names as it impedes handover and data sharing.

- Avoid long names

- Avoid using spaces, dots, commas and special characters (e.g. ~ ! @ # $ % ^ & * ( ) ` ; < > ? , [ ] { } ‘ “)

- Avoid repetition for ex. Directory name Electron_Microscopy_Images, then you don’t need to name the files ELN_MI_Img_20200101.img

Examples:

- Stanford Libraries guidance on file naming is a great place to start.

- Dryad example:

- 1900-2000_sasquatch_migration_coordinates.csv

- Smith-fMRI-neural-response-to-cupcakes-vs-vegetables.nii.gz

- 2015-SimulationOfTropicalFrogEvolution.R

Directory structures and README files

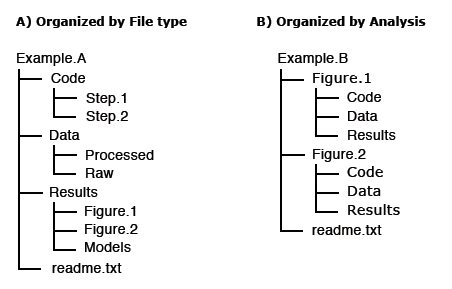

A clear directory structure will make it easier to locate files and versions and this is particularly important when collaborating with others. Consider a hierarchical file structure starting from broad topics to more specific ones nested inside, restricting the level of folders to 3 or 4 with a limited number of items inside each of them.

The UK data services offers an example of directory structure and naming: https://ukdataservice.ac.uk/manage-data/format/organising.aspx

For others to reuse your research, it is important to include a README file and to organize your files in a logical way. Consider the following file structure examples from Dryad:

It is also good practice to include README files to describe how the data was collected, processed, and analyzed. In other words, README files help others correctly interpret and reanalyze your data. A README file can include file names/directory structure, glossary/definitions of acronyms/terms, description of the parameters/variables and units of measurement, report precision/accuracy/uncertainty in measurements, standards/calibrations used, environment/experimental conditions, quality assurance/quality control applied, known problems, research date information, description of relationships/dependencies, additional resources/references, methods/software/data used, example records, and other supplemental information.

-

Dryad README file example: https://doi.org/10.5061/dryad.j512f21p

-

Awesome README list (for software): https://github.com/matiassingers/awesome-readme

-

Different Format Types https://data.library.virginia.edu/data-management/plan/format-types/

Disciplinary Data Formats

Many disciplines have developed formal metadata standards that enable re-use of data; however, these standards are not universal and often it requires background knowledge to indentify, contextualize, and interpret the underlying data. Interoperability between disciplines is still a challenge based on the continued use of custom metadata schmes, and the development of new, incompatiable standards. Thankfully, DataCite is providing a common, overarching metadata standard across disciplinary datasets, albeit at a generic vs granular level.

In the meantime, the Research Data Alliance (RDA) Metadata Standards Directory - Working Group developed a collaborative, open directory of metadata standards, applicable to scientific data, to help the research community learn about metadata standards, controlled vocabularies, and the underlying elements across the different disciplines, to potentially help with mapping data elements from different sources.

Exercise/Quiz?

Metadata Standards Directory

Features: Standards, Extensions, Tools, and Use Cases

Quality Control

Quality control is a fundamental step in research, which ensures the integrity of the data and could affect its use and reuse and is required in order to identify potential problems.

It is therefore essential to outline how data collection will be controlled at various stages (data collection,digitisation or data entry, checking and analysis).

Versioning

In order to keep track of changes made to a file/dataset, versioning can be an efficient way to see who did what and when, in collaborative work this can be very useful.

A version control strategy will allow you to easily detect the most current/final version, organize, manage and record any edits made while working on the document/data, drafting, editing and analysis.

Consider the following practices:

- Outline the master file and identify major files for instance; original, pre-review, 1st revision, 2nd revision, final revision, submitted.

- Outline strategy for archiving and storing: Where to store the minor and major versions, how long will you retain them accordingly.

- Maintain a record of file locations, a good place is in the README files

Example: UK Data service version control guide: https://www.ukdataservice.ac.uk/manage-data/format/versioning.aspx

Research vocabularies

Research Vocabularies Australia https://vocabs.ands.org.au/ AGROVOC & VocBench http://aims.fao.org/vest-registry/vocabularies/agrovoc Dimensions Fields of Research https://dimensions.freshdesk.com/support/solutions/articles/23000012844-what-are-fields-of-research-

Versioning/SHA https://swcarpentry.github.io/git-novice/reference

Binder - executable environment, making your code immediately reproducible by anyone, anywhere. https://blog.jupyter.org/binder-2-0-a-tech-guide-2017-fd40515a3a84

Narrative & Documentation Jupyter Notebooks https://www.contentful.com/blog/2018/06/01/create-interactive-tutorials-jupyter-notebooks/

Licenses Licenses rarely used From GitHub https://blog.github.com/2015-03-09-open-source-license-usage-on-github-com/

Lack of licenses provide friction, understanding of whether can reuse Peter Murray Project - ContentMine - The Right to Read is the Right to Mine - OpenMinTed Creative Commons Wizard and GitHub software licensing wizards (highlight attribution, non commercial)

Lessons to teach with this episode Data Carpentry - tidy data/data organization with spreadsheets https://datacarpentry.org/lessons/ Library Carpentry - intro to data/tidy data

Exercise? Reference Management w/ Zotero or other

demo: import Zenodo.org/record/1308061 into Zotero demo: RStudio > Packages > Update, run PANGAEA example, then install updates https://tibhannover.github.io/2018-07-09-FAIR-Data-and-Software/FAIR-remix-PANGAEA/index.html

Useful content for Licenses Note: TIB Hannover Slides https://docs.google.com/presentation/d/1mSeanQqO0Y2khA8KK48wtQQ_JGYncGexjnspzs7cWLU/edit#slide=id.g3a64c782ff_1_138

Additional licensing resources: Choose an open source license: https://choosealicense.com/ 4 Simple recommendations for Open Source Software https://softdev4research.github.io/4OSS-lesson/ Use a license: https://softdev4research.github.io/4OSS-lesson/03-use-license/index.html Top 10 FAIR Imaging https://librarycarpentry.org/Top-10-FAIR//2019/06/27/imaging/ Licensing your work: https://librarycarpentry.org/Top-10-FAIR//2019/06/27/imaging/#9-licensing-your-work The Turing Way a Guide for reproducible Research: https://the-turing-way.netlify.app/welcome Licensing https://the-turing-way.netlify.app/reproducible-research/licensing.html The Open Science Training Handbook: https://open-science-training-handbook.gitbook.io/book/ Open Licensing and file formats https://open-science-training-handbook.gitbook.io/book/open-science-basics/open-licensing-and-file-formats#6-open-licensing-and-file-formats DCC How to license research data https://www.dcc.ac.uk/guidance/how-guides/license-research-data

Exercise- Thanks, but no Thanks!

In groups of 2-3 discuss and note down;

- Have you ever received data you couldn’t use? & why not?

- Have you tried replicating an experiment, yours or someone else? What challenges did you face?

Key Points

First key point.

Assessment

Overview

Teaching: 10 min

Exercises: 50 minQuestions

How can I assess the FAIRness of myself, an organisation, a service, a community… ?

Which FAIR assessment tools exist to understand how FAIR you are?

Objectives

Assess the current FAIRness level of myself, organisation, service, community…

Know about available tools for assessing FAIRness.

Understand the next steps you can take to being FAIRer.

Reasons for assessment

FAIR is a journey. Technology and the way people work is shifting often and what might be FAIR today might not be months, years from now. A FAIR assessment now is a snapshot in time. Nevertheless, individuals, organizations, disciplines, services, countries, and communities will look to how FAIR they are. The reasons are various, including gaining a better understanding, comparing with others, making improvements, and participating further in the scholarly ecosystem, to name a handful. Ultimately, an assessment can be a helpful guide on the path to becoming more FAIR.

Mirror, mirror on the wall, who is the FAIRest one of all?

Mirror, mirror on the wall, who is the FAIRest one of all? - In March 2020, Theuringen FDM-TAGE offered awards to the FAIRest datasets based on the FAIR principles. The FAIRest Dataset winners were announced in June 2020. What is FAIR about the winning datasets? Is there anything else that can be done to make them FAIRer?

FAIR is a vision, NOT a standard

The FAIR principles are a way of reaching for best data and software practices, coming to a convergence on what those are, and how to get there. They are NOT rules. They are NOT a standard. They are NOT a requirement. The principles were not meant to be prescriptive but instead offer a vision to optimise data/software sharing and reuse by humans and machines.

Inconsistent interpretations

The lack of information on how to implement the FAIR principles have led to inconsistent interpretations. Jacobsen, A., de Miranda Azevedo, R., Juty, N., Batista, D., Coles, S., Cornet, R., … & Goble, C. (2020). FAIR principles: interpretations and implementation considerations describes implementation considerations.

Types of assessment

Depending on your needs, whether you want to assess yourself, a service, your organization or community, or even your country or region, FAIR assessment or evaluation tools are available to help guide you in your path towards FAIR betterment. The following are some resources and exercises to help you get started.

Individual assessment

How FAIR are you? The FAIRsFAIR project has developed an assessment tool called FAIR-Aware that both helps you understand the principles and also how you can improve the FAIRness of your research. Before taking the assessment, have a target dataset/software in mind to prepare you for the questions which include questions about yourself and 10 questions about FAIR. Each question provides additional information and guidance and helps you assess your current FAIRness level along with potential actions to take. The assessment takes 10 to 30 minutes to complete depending on your familiarity with the subject and issues covered.

Challenge

Encourage your workshop participants to review the episodes in this FAIR lesson and take the FAIR-Aware assessment ahead of time. In person (or virtual), ask the participants to split up into groups and to highlight some of their key questions/findings from the FAIR-Aware assessment. Ask them to note their questions/findings/anything else in the session’s collaborative notes. After a duration, ask the groups to return to the main group and call on each group (leader) to summarise their discussion. Synthesise some of the key points and discuss next steps on how participants can address their FAIRness moving forward.

Alternatively, the Australian Research Data Commons (ARDC) FAIR data assessment tool and/or the How FAIR are your data? checklist by Jones and Grootveld are also available and can be substituted for the FAIR-Aware assessment tool.

Evaluate the FAIRness of digital resources

How FAIR is your service and the digital resources you share? How can your service enable greater machine discoverability and (re)use of its digital resoruces? Evaluation of your service’s FAIRness lies on a continuum based on the behaviors and norms of your community. Frameworks and tools to assess services are currently under development and what options are available should be paired with the evaulation of what makes sense to your community.

FAIR Evaluation Services

The FAIR Evaluation Service is available to assess the FAIRness of your digital resources. Developed by the Maturity Indicator Authoring Group, FAIR Maturity Indicators are available to test your service via a submission process. The rationale for the Service are explained in Cloudy, increasingly FAIR; revisiting the FAIR Data guiding principles for the European Open Science Cloud. To get started, the Group can also be reached via their Service.

As a service provider, for example a data repository, you might want to assess the FAIRness of datasets in your systems. You can do this by using one of the resources at FAIRassist or you can start your assessment manually (as a group exercise). Some infrastructure providers have provided overviews of how their services enable FAIR.

- Zenodo offers an overview of how the service responds to the FAIR principles.

- Figshare also published a statement paper on how it supports the FAIR principles.

Challenge

Encourage your workshop participants to review the episodes in this FAIR lesson and then review the FAIR principles responses/statements from Zenodo and Figshare above before the workshop. Again, ahead of the workshop, ask the participants to develop similar responses/statements for a service at their organisation, in their community. An outline with brief bullet points is best. Pre-assign workshop participants to groups and ask them to share their responses/statements with each other. Then in person (or virtual), ask the participants to split up into their pre-assigned groups and to discuss each other’s responses/statements. Ask them to note their questions/findings/anything else in the session’s collaborative notes. After a duration, ask the groups to return to the main group and call on each group (leader) to summarise their discussion. Synthesise some of the key points and discuss next steps on how participants can address their FAIRness moving forward.

Quantifying FAIR

In a recent DataONE webinar titled Quatifying FAIR, Jones and Slaughter describe tests that have been conducted to assess the FAIRness of digital resources across their services. The MetaDIG tool is referenced, used to check the quality of metadata in these services. Based on this work, DataONE also lists a Make your data FAIR tool as coming soon

Community assessment

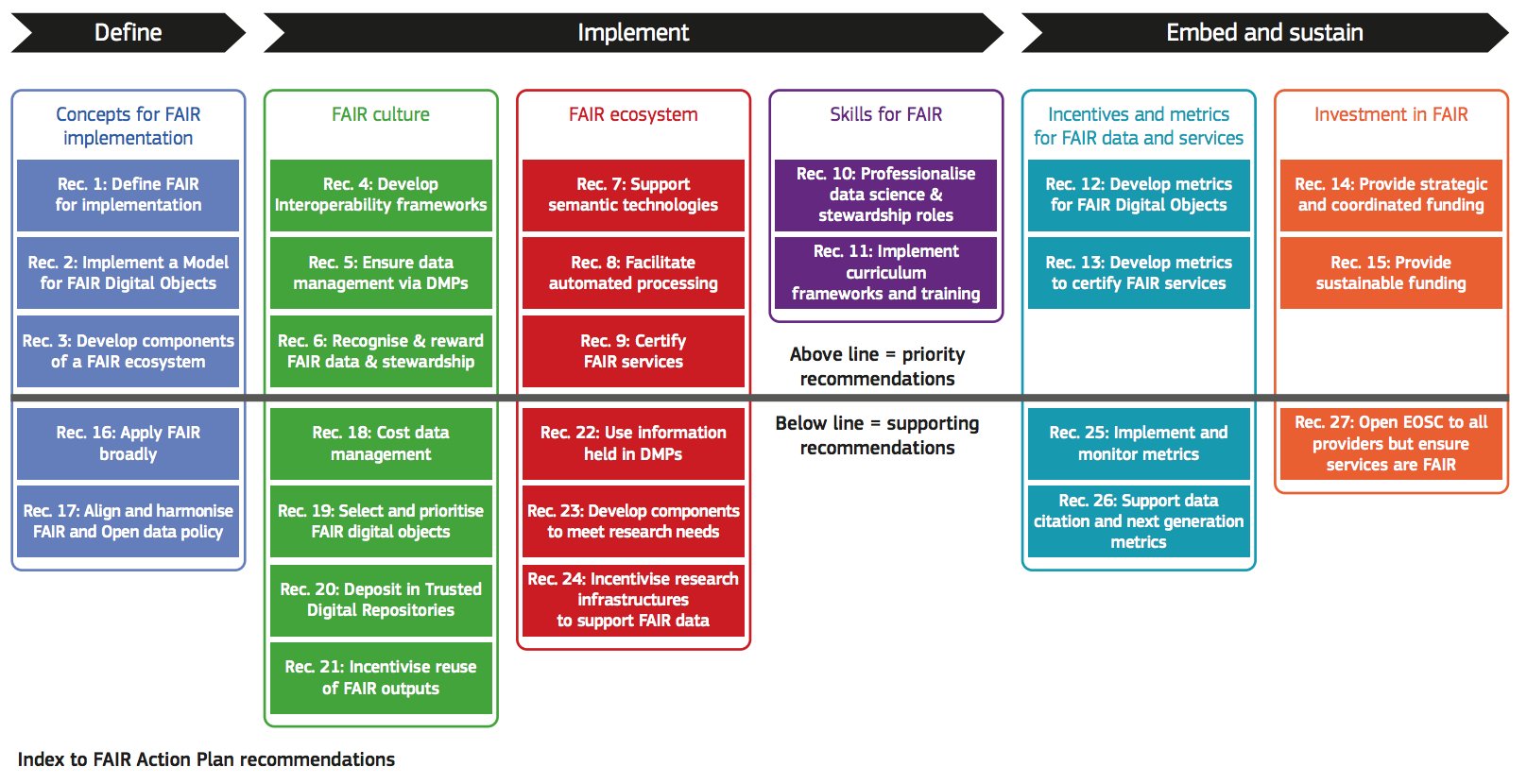

Communities can also assess how FAIR they are and develop goals and/or action plans for advancing FAIR. Communities can range from topical to regional and even organisational. A recently published report from the Directorate-General for Research and Innovation (European Commission) titled “Turning FAIR into reality” is an invaluable resource for creating action plans and turning FAIR into reality for communities. The report includes a survey and analysis of what is needed to implement FAIR, concrete recommendations and actions for stakeholders, and example case studies to learn from. Ultimately, the report serves as a useful framework for mapping a community’s next steps towards a FAIRer future.

Challenge

Encourage your workshop participants to first read:

Collins, Sandra, et al. “Turning FAIR into reality: Final report and action plan from the European Commission expert group on FAIR data.” (2018). https://op.europa.eu/en/publication-detail/-/publication/7769a148-f1f6-11e8-9982-01aa75ed71a1

Leverage the recommendations (pictured below) and organise group discussions on these themes. Ask the participants to brainstorm initial responses to these themes ahead of the workshop and collect their initial responses in a collaborative document, structured by the themes. During the workshop, ask the participants to join their groups based on the themes they initially responded to and discuss each other’s responses. Ask them to note their questions/findings/anything else in the session’s collaborative notes. After a duration, ask the groups to return to the main group and call on each group (leader) to summarise their discussion. Synthesise some of the key points and discuss next steps on how participants can address their FAIRness moving forward.

Challenge

Alternatively, start your community off on the path to FAIR by setting up an initial study group. The group’s goal, to scan, and possibly survey, work that has been done by community members (or like communities) on example/case studies, policies, recommendation, guidance, etc to collect resources to help inform future FAIR discussions/initiatives. Consider structuring your group work to produce guidance, e.g. Top 10 FAIR Data and Software Things:

Paula Andrea Martinez, Christopher Erdmann, Natasha Simons, Reid Otsuji, Stephanie Labou, Ryan Johnson, … Eliane Fankhauser. (2019, February). Top 10 FAIR Data & Software Things. Zenodo. http://doi.org/10.5281/zenodo.3409968

Other assessment tools

To see a list of additional resources for the assessment and/or evaluation of digital objects against the FAIR principles, see FAIRassist.

Planning

Data and software management plans are also a helpful tool for planning out how you/your group will manage data and software throughout your project but they also provide a mechanism for assessment at different checkpoints. Revisiting your plan at different checkpoints allows you to review how well you are doing, incorporate findings, and make improvements. This allows your plan to evolve, be more actionable, and less static.

Resources and examples include:

Data:

- Data Stewardship Wizard

- DMPonline

- DMPTool

- ICPSR example pans

- LIBER Research Data Management Plan (DMP) Catalogue

Software:

- ELIXIR Software Management Plan

- SSI Software Management Plans

- CLARIAH Guidelines for Software Quality

- EURISE Network Software Quality Checklist

Challenge

Use one of the resources above to draft a plan for your research project (as an individual and/or group). As an individual, ask a colleague to review your draft, provide feedback, and discuss. As a group, outline the plan questions in a collaborative document and work together to draft responses, then discuss together as a group. Consider publishing your plan to share with others.

Resources

This is a developing area, so if you have any resources that you would like to share, please add them to this lesson via a pull request or GitHub issue.

Key Points

Assessments and plans are helpful tools for understanding next steps/action plans for becoming more FAIR.

Software

Overview

Teaching: 0 min

Exercises: 0 minQuestions

Key question

Objectives

The objective of this lesson is to get learners up to speed on how the FAIR principles apply to software, and to make learners aware of accepted best practices.

Applying FAIR to software

The FAIR principles have been developed with research data in mind, however they are relevant to all digital objects resulting from the research process - which includes software. The discussion around FAIRification of software - particularly with respect to how the principles can be adapted, reinterpreted, or expanded - is ongoing. This position paper (https://doi.org/10.3233/DS-190026) elaborates on this discussion and provides an overview on how the 15 FAIR Guiding Principles can be applied to software.

BTW what do we mean by software here? Software can refer to programming scripts and packages such as those for R & Python, as well as programs and webtools/webservices such as …… For the most part, this lesson focuses on the former.

Note/Acknowledgements

This lesson draft, complied during the FAIR lesson sprint @CarpentryCon2020, draws heavily from the Top 10 FAIR Data & Software Things + FAIR Software Guide (see reference list).

Findable

For software to be findable,

- it should be accompanied with sufficiently rich metadata and persistent identifiers.

-

Software should be well documented to to increase findability and eventually, reusability. This metadata can start with a minimum set of information that includes a short description and meaningful keywords. This documentation be further improved by applying metadata standards such as those from CodeMeta (https://codemeta.github.io/terms/) or DataCite.

-

Software should be registered in a dedicated registry such as Research Software Directory, rOpenSci project, Zenodo. These registries will usually request the previously mentioned metadata descriptors, and they are optimized to show up in search engine results. question to be resolved: how is a registry different from a repository?

-

When attaching identifiers to software, they should be unqiue and persistent. They should point only the one version and location of your software. A software registry usually provides a PID, otherwise you can obtain one from another organization and include it in your metadata yourself. See: Software Hertigage archive.

-

Your software can also be hosted/deposited in an open and publicly accessible repository, preferably with version control and citation guidelines. Examples: Zenodo or GitHub (see GitHub doc on citing code, but note the issue about attaching identifiers and the ‘permanence’ of GitHub). Still have to check the difference between registries and repositories.

Accessible

For software to be accessible,

- software metadata should be in machine and human readable formats.

- software and metadata should be deposited in a trusted community-approved repository.

-

What does it mean to make metadata machine and human readable? This should be covered in a previous lesson and referred back here…

-

The (executable) software and metadata should be publicly accessible and downloadable. These downloadable and executable files can be hosted or deposited in a repository / registry, as well as project websites.

Interoperable

For softare to be interoperable,

- community accepted standards and platforms should be used, making it possible for other users to run the software.

-

Interoperability is increased when there is documentation that outlines functions of the software. This means providing clear and concise descriptions of all the operations available with the software, including input and output functions and the data types associated with them.

-

If community standards are available, input and output formats should adhere to them. This makes it easy to exchange data between software (the data itself will be better linked too).

Reusable

For software to be reusable,

- it should have a clear licence and documentation.

-

Software should be sufficiently documented beyond metadata. This could include instructions, troubleshooting, statements on dependencies, examples or tutorials.

-

Include a license. This informs potential users on how they may/may not (re)use the software.

-

Include a citation guideline, if you’d like to receive credit or acknowledgement for your work.

-

Combine best practices for software development with FAIR. This would include working out of a repository with version control (which allows for tracking changes and transparency), following code standards, building modual code etc.

-

In addition to 4, you can use a software quality checklist. There are several available and you can select which is most suitable for your software. A good checklist will include items which allow for a granular evaluation of the software, explains the rationale behind the check, and explains how to implement the check. It can cover documentation, testing, standardization of code etc. You quality checklist could be maintained in your README file.

References

- position paper: https://doi.org/10.3233/DS-190026

- Top 10 Fair Data & Software Things: https://librarycarpentry.org/Top-10-FAIR//2018/12/01/research-software/

- FAIR Software guide: https://fair-software.eu/

- Netherlands eScience Center FAIR Software lesson outline: https://escience-academy.github.io/2020-09-08-4tu-fair-software/

- CarpentryCon2020 FAIR Software course: https://www.youtube.com/watch?time_continue=321&v=-x81mpkQAWo&feature=emb_logo / Etherpad: https://pad.carpentries.org/cchome-FAIR-software

- FSCI2020 FAIR Data course (session 3 slides): https://osf.io/jx2tp/

Lessons to teach in connection with this section and exercises

Software Carpentry: Version Control with Git or Library Carpentry: Intro to Git Making your Code Citeable Does an exercise or lesson exist that we can point to involving Software Heritage?

Key Points

First key point.